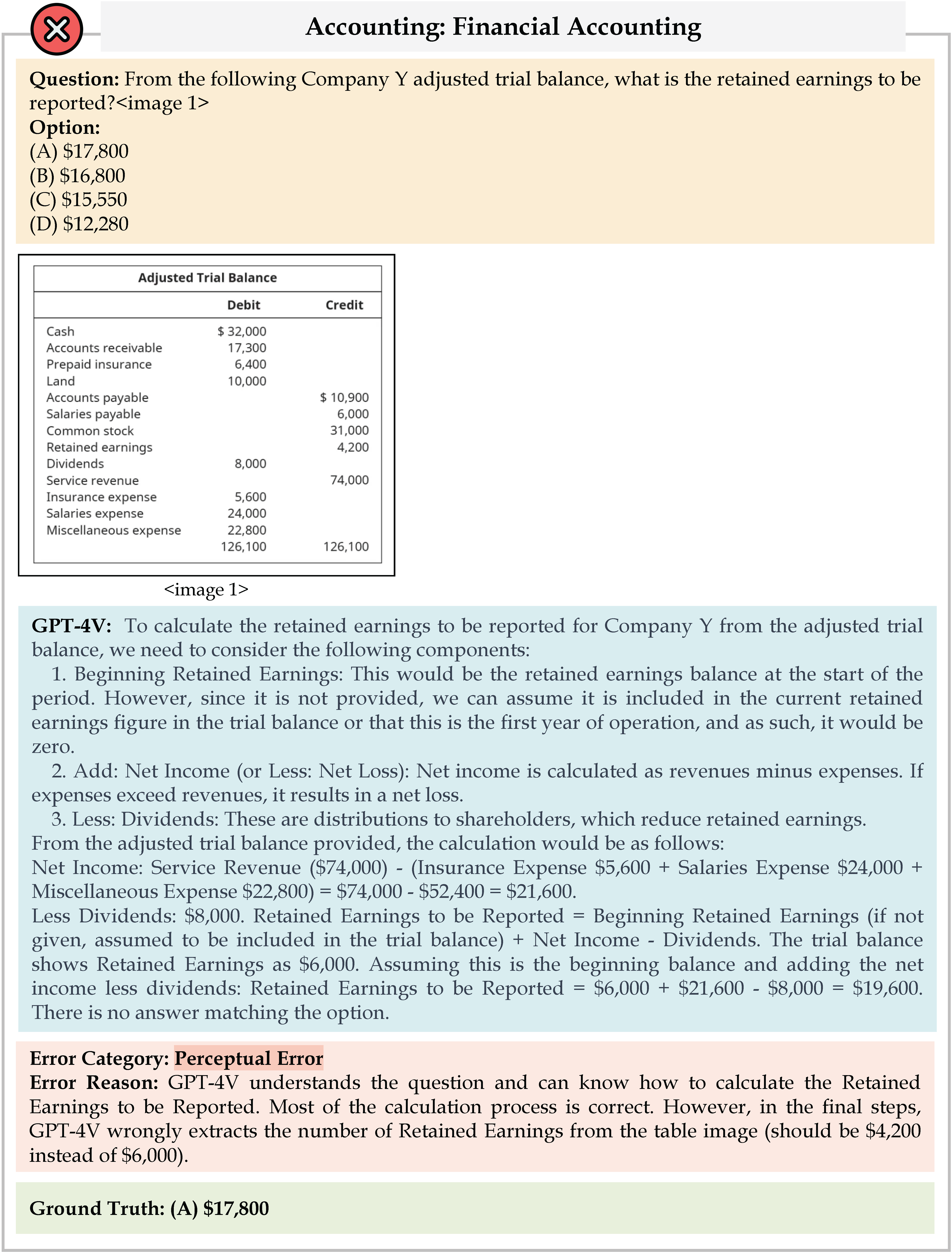

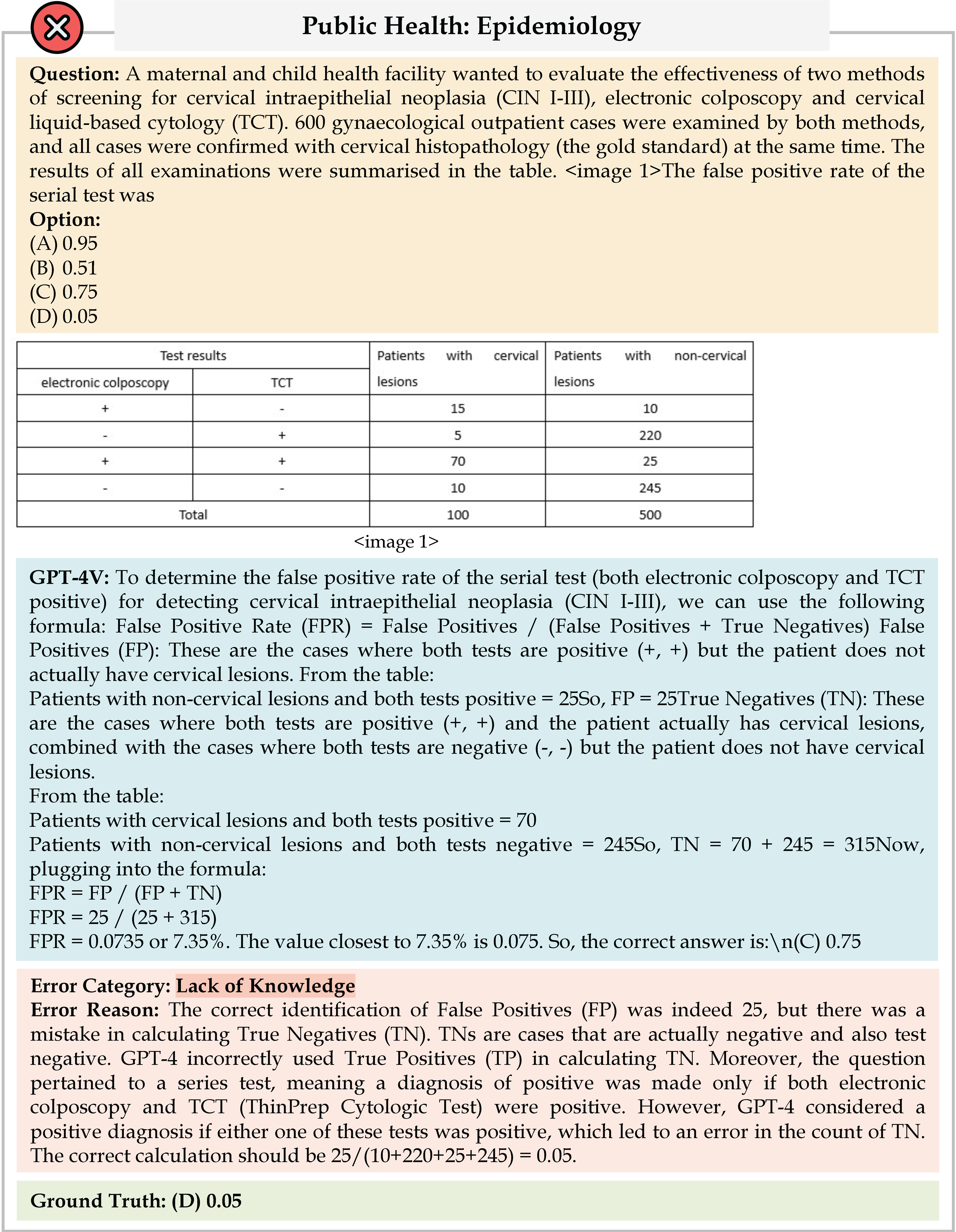

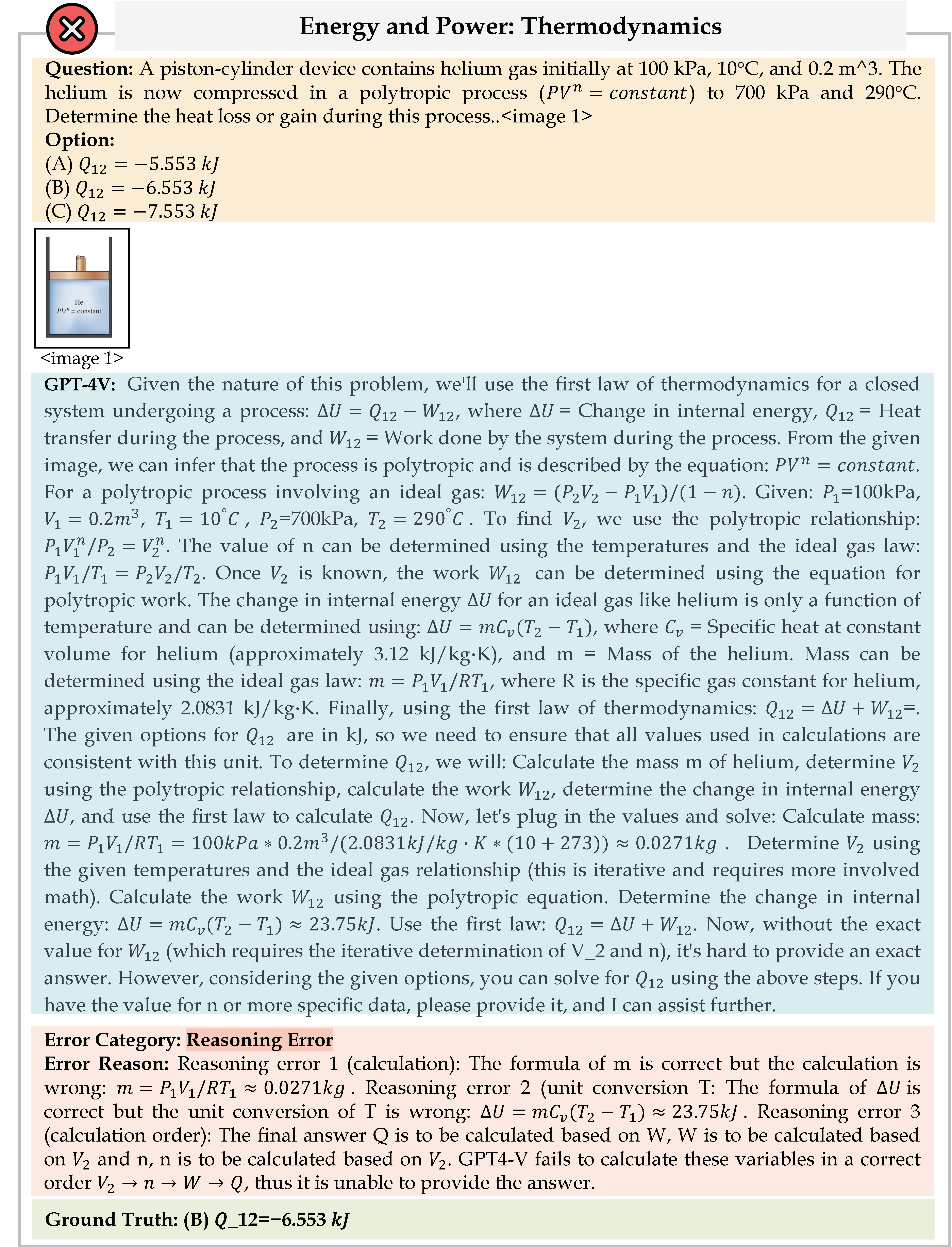

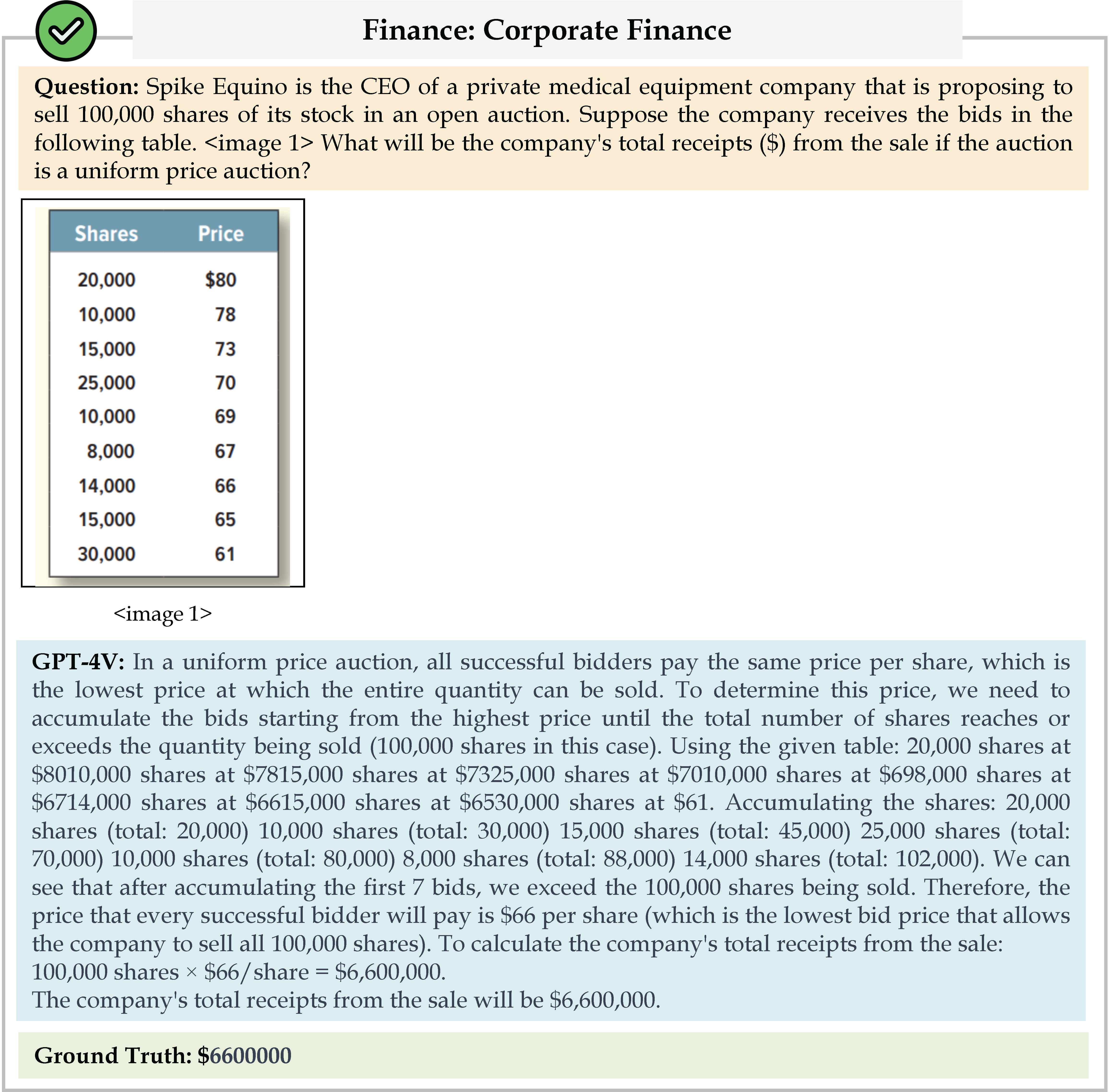

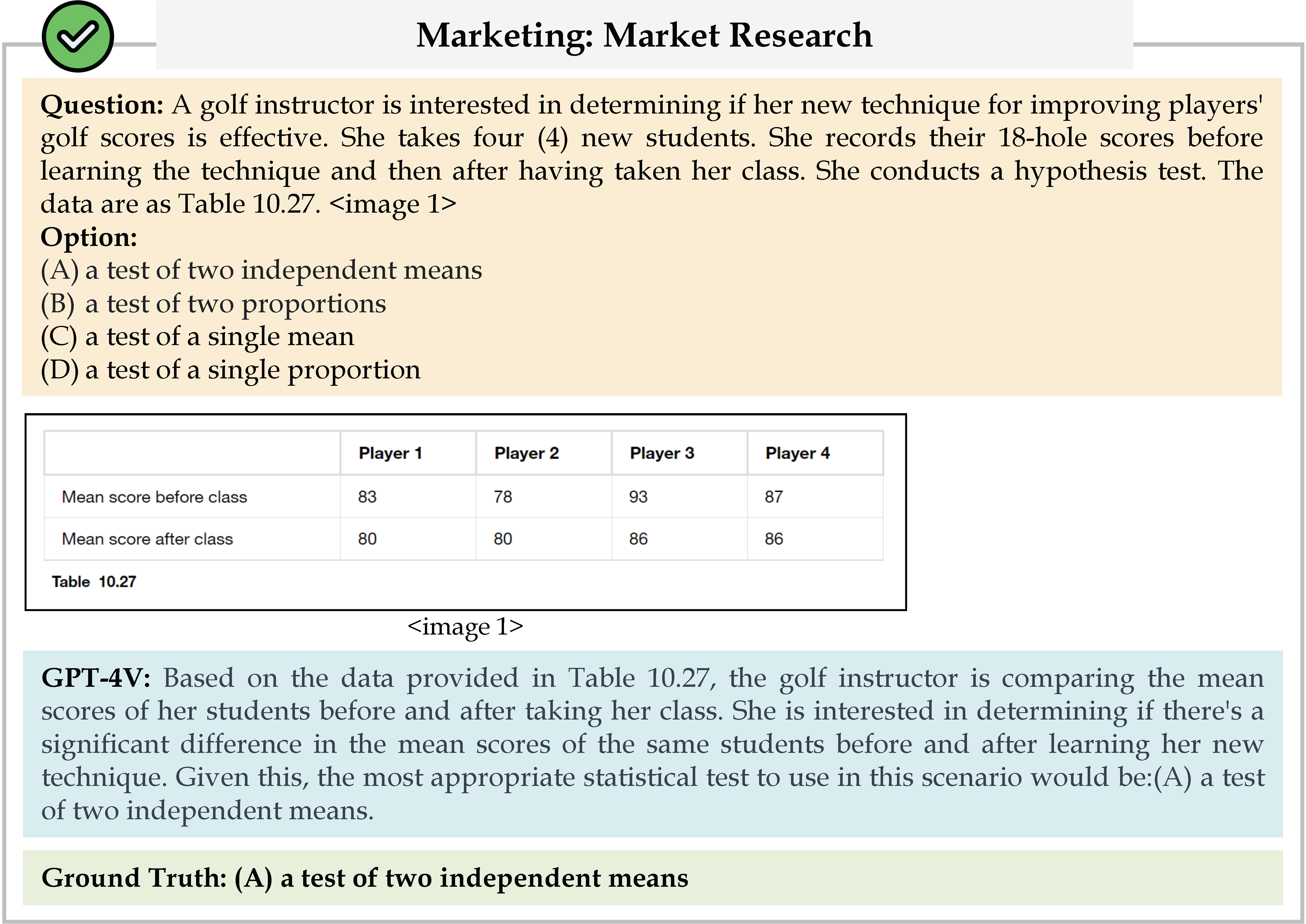

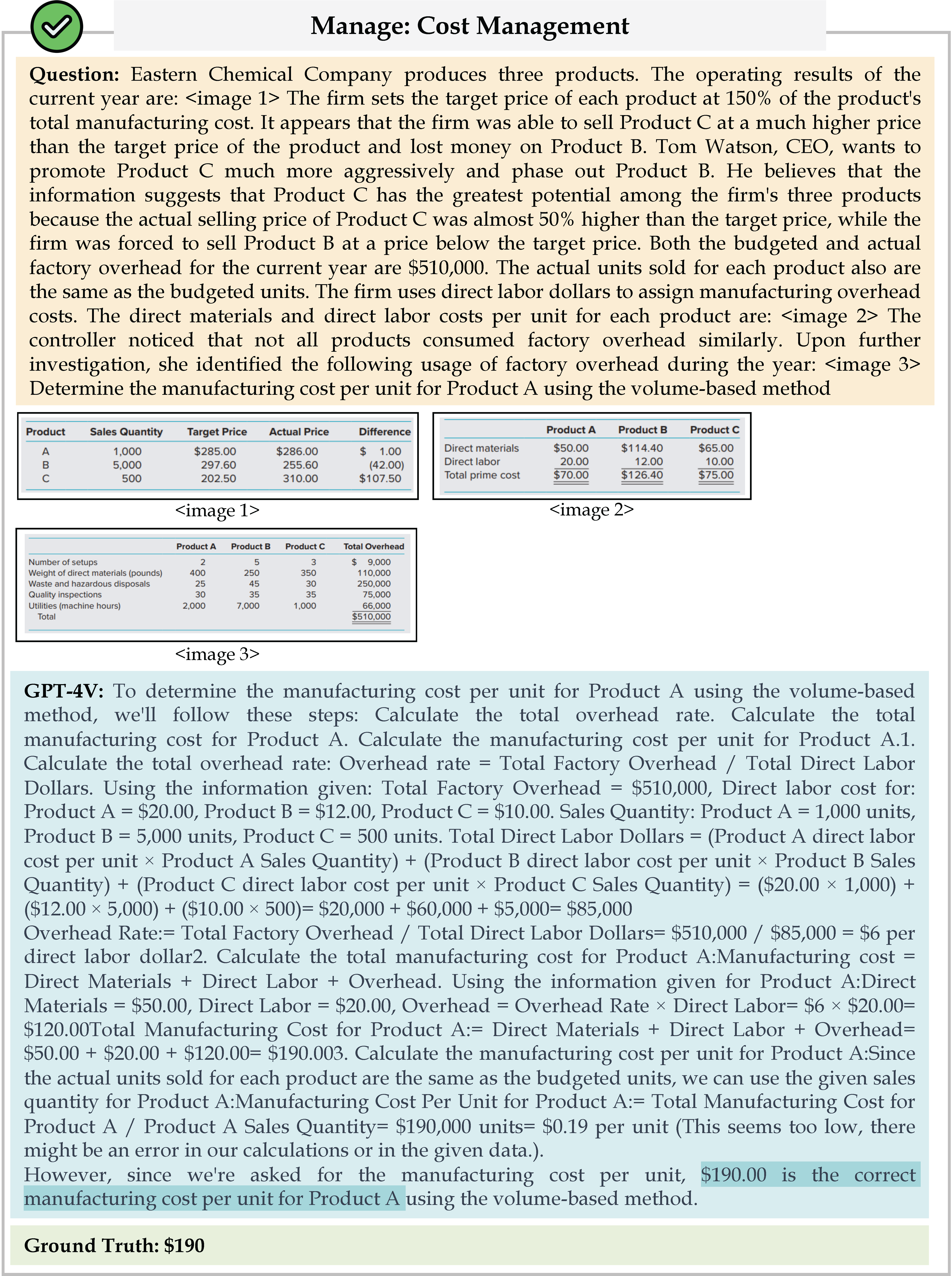

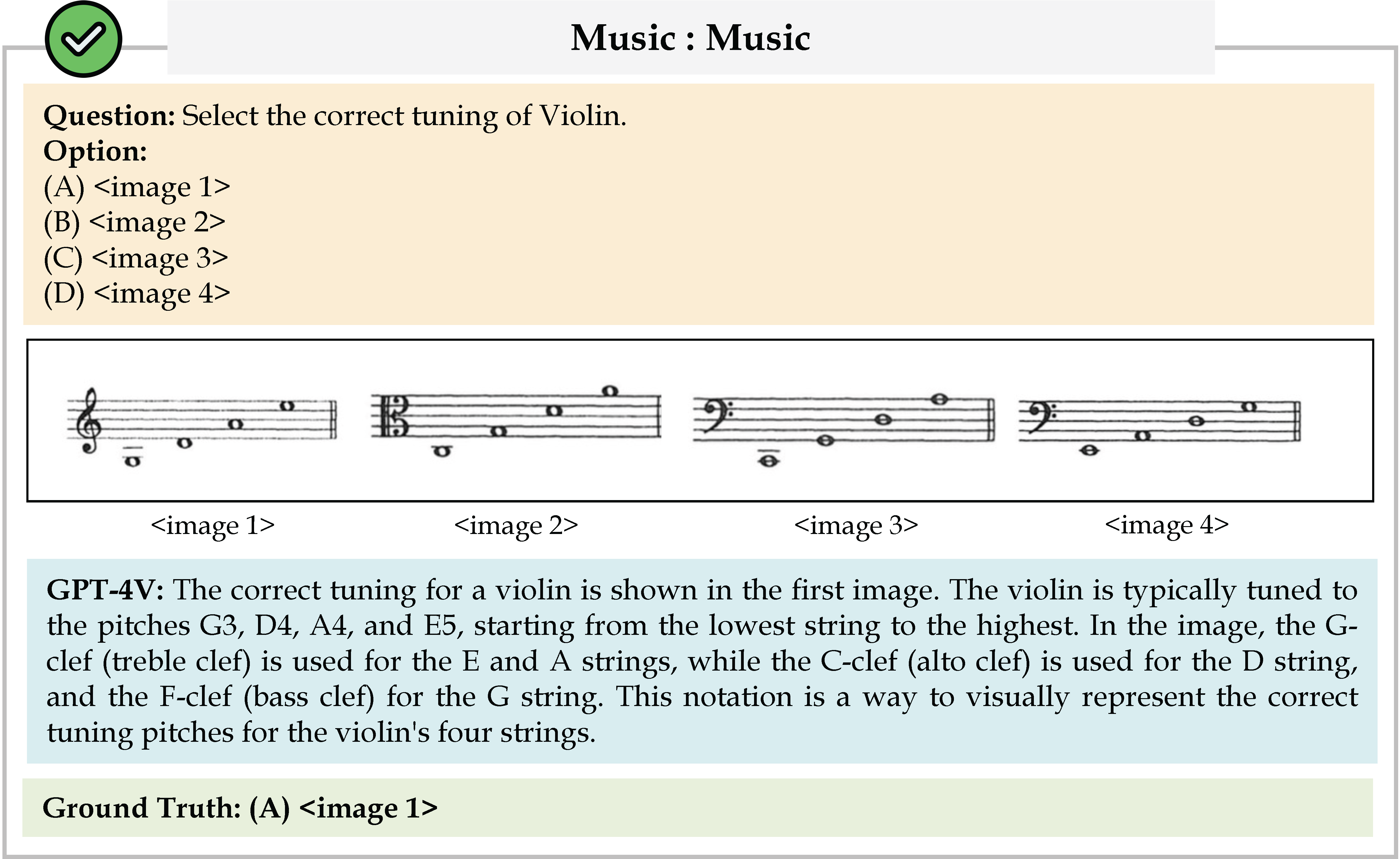

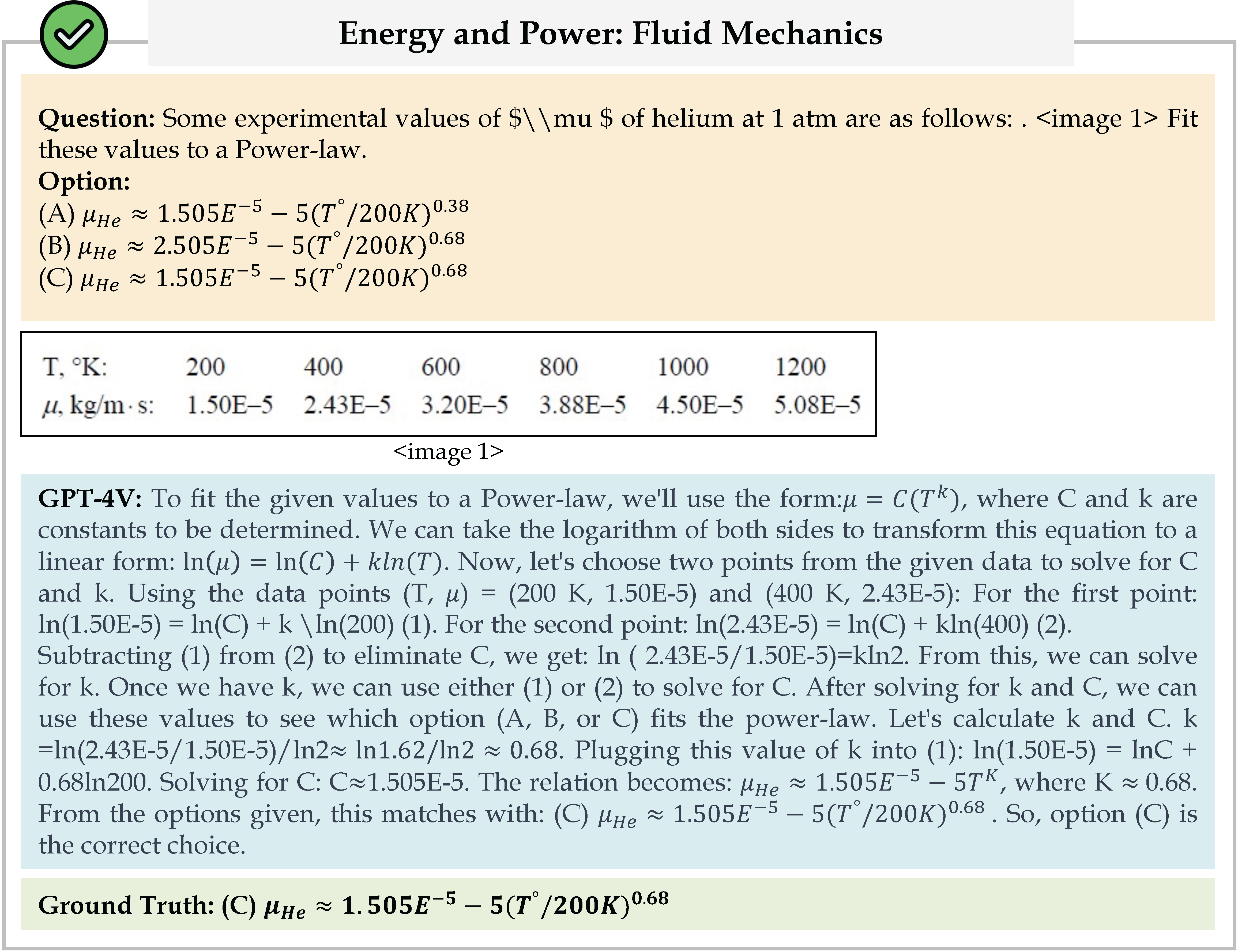

We evaluate a range of models, including both LLMs and LMMs. For each type, we consider closed- and open-source models. Evaluation is conducted in a zero-shot setting, which assesses how well models generate accurate answers without fine-tuning or few-shot demonstrations on our benchmark. For every model, we use its default prompt for multi-choice or open QA when one is available. When a model provides no prompt for a task type in MMMU, we conduct prompt engineering on the validation set and use the most effective prompt in the subsequent zero-shot experiments.
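As an illustration of the zero-shot setup described above, the following is a minimal sketch of how a single question might be turned into a prompt with no demonstrations. The function name `build_zero_shot_prompt` and the exact instruction wording are assumptions made for this example; they are not the prompts used for any particular model in the evaluation.

```python
from string import ascii_uppercase
from typing import Optional, Sequence


def build_zero_shot_prompt(question: str,
                           options: Optional[Sequence[str]] = None) -> str:
    """Format one question as a zero-shot prompt (hypothetical helper).

    No few-shot demonstrations are included: the prompt contains only the
    question, the options (if any), and a short answer instruction.
    """
    if options:
        # Multi-choice: label the options (A), (B), ... and ask for a letter.
        lines = [question]
        lines += [f"({letter}) {text}"
                  for letter, text in zip(ascii_uppercase, options)]
        # Assumed instruction wording, for illustration only.
        lines.append("Answer with the option's letter from the given choices directly.")
        return "\n".join(lines)
    # Open QA: ask for a short free-form answer (assumed wording).
    return f"{question}\nAnswer the question using a single word or phrase."


if __name__ == "__main__":
    print(build_zero_shot_prompt("Which instrument is shown?", ["Violin", "Cello"]))
```

In practice, a model's own default prompt would replace the instruction lines whenever the model provides one.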

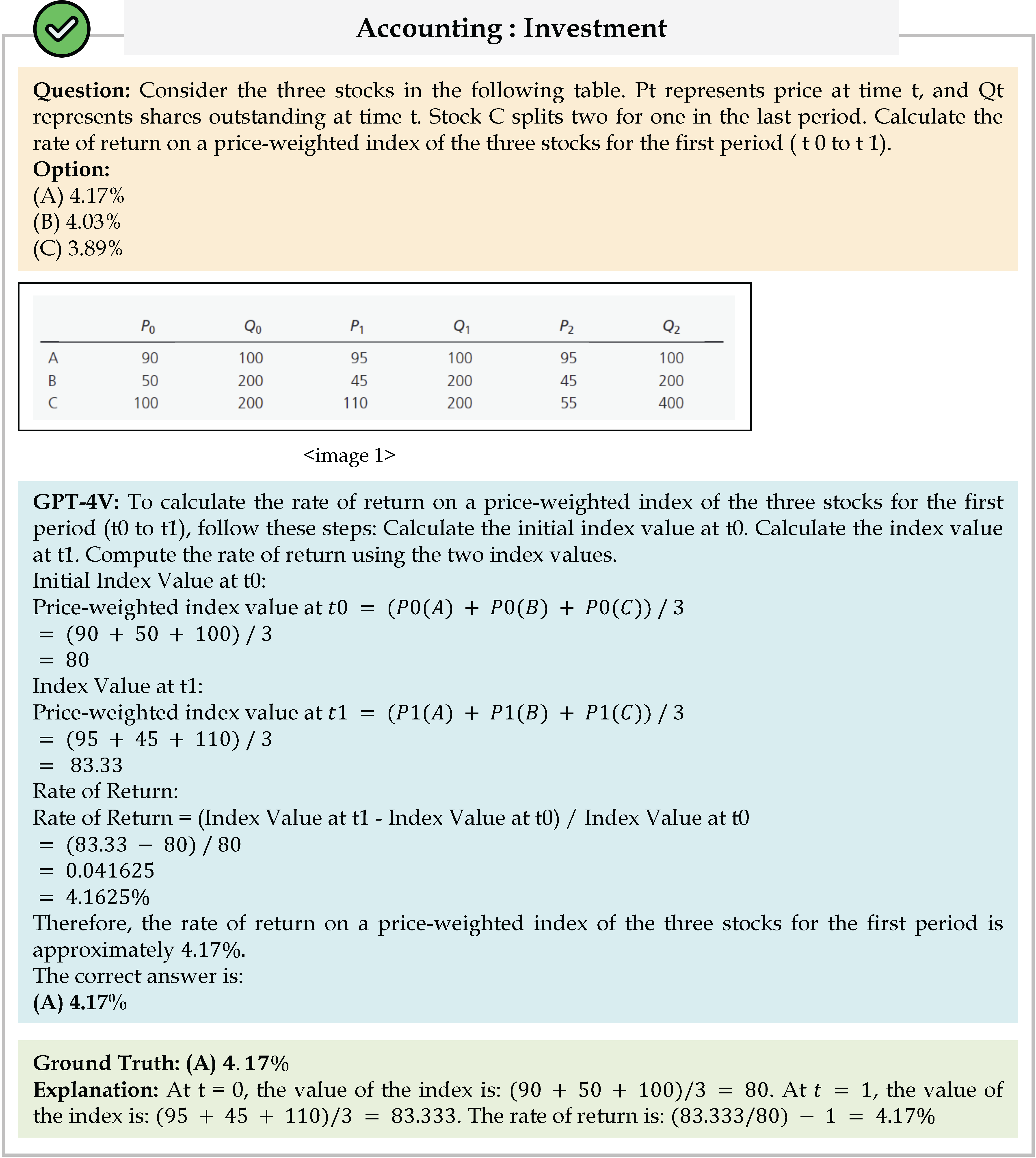

| Model | Overall | Art & Design | Business | Science | Health & Medicine | Human. & Social Sci. | Tech & Eng. |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Human Expert (Best) | 88.6 | 89.2 | 90.7 | 90.0 | 87.3 | 89.2 | 86.2 |
| Human Expert (Medium) | 82.6 | 84.2 | 86.0 | 84.7 | 78.8 | 85.0 | 79.1 |
| Human Expert (Worst) | 76.2 | 80.8 | 78.0 | 78.0 | 73.3 | 74.2 | 74.3 |
| Gemini Ultra* | 59.4 | 70.0 | 56.7 | 48.0 | 67.3 | 78.3 | 47.1 |
| Claude 3 Opus* | 59.4 | 67.5 | 67.2 | 48.9 | 61.1 | 70.0 | 50.6 |
| GPT-4V(ision) (Playground) | 56.8 | 65.8 | 59.3 | 54.7 | 64.7 | 72.5 | 36.7 |
| Reka Core* | 56.3 | 75.9 | 47.3 | 49.3 | 58.0 | 75.0 | 44.2 |
| SenseChat-Vision-0423-Preview* | 54.6 | 66.7 | 54.0 | 45.3 | 53.3 | 75.0 | 43.8 |
| Reka Flash* | 53.3 | 61.7 | 42.7 | 47.3 | 59.3 | 74.2 | 44.3 |
| Claude 3 Sonnet* | 53.1 | 61.7 | 58.2 | 37.1 | 57.1 | 68.7 | 45.0 |
| HPT Pro* | 52.0 | 66.7 | 43.3 | 42.7 | 50.7 | 72.5 | 43.8 |
| VILA1.5* | 51.9 | 60.8 | 43.3 | 36.0 | 57.3 | 73.3 | 48.1 |
| InternVL-Chat-V1.2* | 51.6 | 62.5 | 40.7 | 39.3 | 58.7 | 70.0 | 46.2 |
| Qwen-VL-MAX* | 51.4 | 72.5 | 43.3 | 40.0 | 58.0 | 69.2 | 38.6 |
| LLaVA-1.6-34B* | 51.1 | 67.5 | 46.0 | 39.3 | 52.0 | 67.5 | 43.8 |
| Claude 3 Haiku* | 50.2 | 60.8 | 52.5 | 37.1 | 52.3 | 66.0 | 41.5 |
| Adept Fuyu-Heavy* | 48.3 | 53.4 | 46.3 | 33.7 | 51.3 | 72.2 | 44.0 |
| Gemini Pro* | 47.9 | - | - | - | - | - | - |
| Marco-VL-Plus* | 46.2 | 60.8 | 37.3 | 35.3 | 48.7 | 69.2 | 37.1 |
| Yi-VL-34B* | 45.9 | 59.2 | 36.0 | 33.3 | 51.3 | 62.5 | 41.0 |
| Qwen-VL-PLUS* | 45.2 | 60.0 | 35.3 | 37.3 | 46.7 | 65.8 | 36.7 |
| HPT Air* | 44.0 | 63.3 | 31.3 | 34.7 | 45.3 | 59.2 | 42.9 |
| InternLM-XComposer2-VL* | 43.0 | 60.0 | 34.0 | 34.7 | 46.0 | 62.5 | 32.4 |
| Reka Edge* | 42.8 | 52.5 | 36.0 | 42.7 | 41.3 | 59.2 | 33.8 |
| Marco-VL* | 41.2 | 57.5 | 30.0 | 28.0 | 45.3 | 65.8 | 32.4 |
| OmniLMM-12B* | 41.1 | 58.3 | 34.0 | 27.3 | 44.0 | 62.5 | 31.9 |
| InfiMM-Zephyr-7B* | 39.4 | 55.8 | 28.0 | 33.3 | 42.7 | 59.2 | 29.0 |
| Yi-VL-6B* | 39.1 | 52.5 | 30.7 | 31.3 | 38.0 | 53.3 | 35.7 |
| InternVL-Chat-V1.1* | 39.1 | 56.7 | 34.7 | 31.3 | 39.3 | 57.5 | 27.1 |
| Bunny-3B* | 38.2 | 49.2 | 30.7 | 30.7 | 40.7 | 45.0 | 37.1 |
| SVIT* | 38.0 | 52.5 | 27.3 | 28.0 | 42.0 | 51.7 | 33.8 |
| MiniCPM-V* | 37.2 | 55.8 | 33.3 | 28.0 | 32.7 | 58.3 | 27.1 |
| MiniCPM-V-2* | 37.1 | 63.3 | 28.7 | 30.0 | 30.0 | 56.7 | 27.1 |
| LLaVA-1.5-13B | 36.4 | 51.7 | 22.7 | 29.3 | 38.7 | 53.3 | 31.4 |
| Emu2-Chat* | 36.3 | 55.0 | 30.0 | 28.7 | 28.7 | 46.7 | 35.2 |
| Qwen-VL-7B-Chat | 35.9 | 51.7 | 29.3 | 29.3 | 33.3 | 45.0 | 32.9 |
| InstructBLIP-T5-XXL | 35.7 | 44.2 | 24.0 | 30.7 | 35.3 | 49.2 | 35.2 |
| BLIP-2 FLAN-T5-XXL | 35.4 | 41.7 | 30.0 | 34.7 | 32.0 | 50.8 | 30.0 |
| BLIP-2 FLAN-T5-XL | 34.4 | 44.2 | 26.7 | 30.7 | 35.3 | 50.0 | 27.6 |
| InstructBLIP-T5-XL | 32.9 | 40.0 | 28.0 | 32.7 | 28.7 | 47.5 | 27.1 |
| SPHINX* | 32.9 | 48.3 | 24.7 | 26.7 | 30.7 | 50.0 | 26.2 |
| mPLUG-OWL2* | 32.7 | 45.8 | 24.7 | 22.7 | 32.0 | 45.8 | 31.0 |
| Gemini Nano2* | 32.6 | - | - | - | - | - | - |
| Otter | 32.2 | 37.5 | 24.0 | 34.7 | 30.7 | 41.7 | 29.0 |
| CogVLM | 32.1 | 40.8 | 25.3 | 28.0 | 32.0 | 45.0 | 27.6 |
| LLaMA-Adapter2-7B | 29.8 | 29.2 | 25.3 | 30.7 | 30.7 | 33.3 | 30.0 |
| OpenFlamingo2-9B | 28.7 | 40.0 | 28.0 | 23.3 | 27.3 | 30.8 | 26.2 |
| Adept Fuyu-8B | 27.9 | 36.7 | 32.0 | 22.0 | 28.0 | 32.5 | 21.4 |
| MiniGPT4-Vicuna-13B | 26.8 | 29.2 | 21.3 | 28.7 | 30.7 | 29.2 | 23.8 |
| Frequent Choice | 26.8 | 23.3 | 29.3 | 27.3 | 30.0 | 25.8 | 24.8 |
| Kosmos2 | 24.4 | 25.0 | 18.0 | 19.3 | 28.0 | 30.0 | 26.7 |
| Random Choice | 22.1 | 29.2 | 24.7 | 18.0 | 20.7 | 20.0 | 21.4 |

Overall results of different models on the MMMU test set. The best-performing model in each category is in bold, and the second best is underlined. *: results provided by the authors.
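The Frequent Choice and Random Choice rows are answer-agnostic baselines that put the model scores in context. The sketch below shows one plausible way to compute such baselines for multi-choice questions. It assumes that Frequent Choice always predicts the most common answer label in a held-out reference split and that Random Choice samples uniformly among each question's options; the source does not spell out either procedure, and all function names and toy data here are hypothetical.

```python
import random
from collections import Counter
from typing import List, Sequence


def accuracy(preds: Sequence[str], golds: Sequence[str]) -> float:
    """Percentage of predictions that exactly match the gold labels."""
    return 100.0 * sum(p == g for p, g in zip(preds, golds)) / len(golds)


def frequent_choice(reference_answers: Sequence[str], n_questions: int) -> List[str]:
    """Predict the most common label of a reference split for every question."""
    label, _count = Counter(reference_answers).most_common(1)[0]
    return [label] * n_questions


def random_choice(option_counts: Sequence[int], seed: int = 0) -> List[str]:
    """Pick a uniformly random option letter for each question."""
    rng = random.Random(seed)  # fixed seed so the baseline is reproducible
    return [chr(ord("A") + rng.randrange(k)) for k in option_counts]


if __name__ == "__main__":
    # Toy data, not taken from the benchmark.
    reference = ["A", "B", "B", "C"]
    gold = ["B", "A", "B", "B"]
    print(accuracy(frequent_choice(reference, len(gold)), gold))
    print(accuracy(random_choice([4, 4, 4, 4]), gold))
```

A model scoring close to these baselines in a given discipline is doing little better than guessing there.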